The Potential Impact of Simple Adjustments

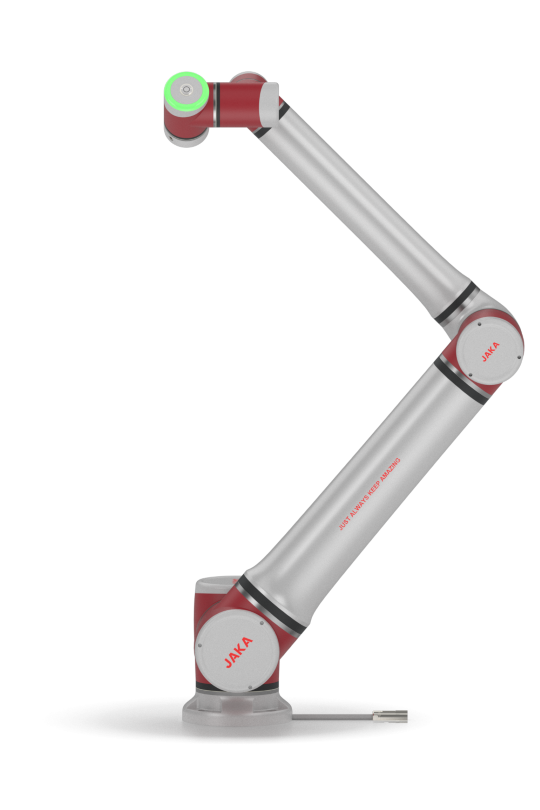

Imagine a factory floor where a few adjustments to the machinery could noticeably raise productivity. According to a recent study, minor changes to automation processes can raise efficiency by up to 30%. The 6-axis robot arm is a particularly good candidate, because it can be fine-tuned to improve its performance considerably. How many manufacturers are missing out on these gains right now?

Understanding 6-Axis Robot Arms

What’s fascinating about the programmable robotic arm is its versatility: it is designed to perform tasks ranging from assembly to packaging with precision. Yet many users overlook the weaknesses of traditional configurations, which can limit how well the arm performs in a given application. For example, if the arm’s joints are not properly calibrated, it may struggle with speed, accuracy, or both, which is frustrating in a high-stakes environment. (I’ve seen this firsthand: during a live assembly demonstration, a miscalibrated robot arm slowed down the entire process.)
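To make the calibration point concrete, here is a minimal sketch using a simplified planar two-link model. It is not a real 6-axis arm and not any vendor's calibration routine. The link lengths, the 0.1 degree offset, and the function names `planar_tip` and `tip_error_mm` are hypothetical and chosen only for illustration. The sketch computes how far the tool tip lands from its commanded position when one joint is slightly off.

```python
import math


def planar_tip(link_lengths_mm, joint_angles_deg):
    """Forward kinematics for a simplified planar serial arm.

    Each joint angle is relative to the previous link; returns the (x, y)
    position of the tip in millimetres. Hypothetical model for illustration.
    """
    x = y = 0.0
    cumulative = 0.0  # absolute orientation of the current link, in radians
    for length, angle in zip(link_lengths_mm, joint_angles_deg):
        cumulative += math.radians(angle)
        x += length * math.cos(cumulative)
        y += length * math.sin(cumulative)
    return x, y


def tip_error_mm(link_lengths_mm, commanded_deg, offsets_deg):
    """Distance between the commanded tip position and the one actually
    reached when each joint carries a small calibration offset."""
    actual = [a + o for a, o in zip(commanded_deg, offsets_deg)]
    x0, y0 = planar_tip(link_lengths_mm, commanded_deg)
    x1, y1 = planar_tip(link_lengths_mm, actual)
    return math.hypot(x1 - x0, y1 - y0)


# Hypothetical example: two 500 mm links, arm fully stretched,
# 0.1 degree offset at the base joint only.
links = [500.0, 500.0]
err = tip_error_mm(links, [0.0, 0.0], [0.1, 0.0])
print(f"{err:.2f} mm")  # prints "1.75 mm"
```

With a 1 m reach, a 0.1 degree error at the base joint shifts the tip by roughly reach × angle (in radians), about 1.75 mm. That shift is large enough to matter for precise assembly work, even though the joint error itself looks negligible.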

Exploring Pain Points in Automation

Over the years, I’ve learned that hidden pain points often emerge in automated workflows. Manufacturers may invest in top-notch equipment, but neglecting ongoing calibration and maintenance can lead to suboptimal performance. I firmly believe that regular assessments are crucial for unlocking the full potential of your 6-axis robot arm. Users may not realize, for instance, that outdated control software can cause significant inefficiencies, sometimes costing businesses thousands of dollars in operating costs. If they knew, their outcomes could look very different.

What’s Next for 6-Axis Technology?

Looking ahead, the future of the programmable robotic arm is bright. As technology evolves, so do the capabilities of these machines. Enhanced sensors, adaptive algorithms, and real-time feedback systems promise to take automation to the next level, and investors and entrepreneurs are turning their attention to this field. I speculate that over the next five years we’ll see more modular designs that make upgrades and modifications easier, so arms can keep pace with changing production needs.

Key Takeaways for Evaluation

So, what lessons can we draw from all this? First, continuous performance evaluations are key to maximizing the return on your investment. Second, embracing new technologies can unlock efficiency levels that were previously out of reach. Third, choosing a reliable brand can make operational changes smoother. With JAKA, for instance, customer support and product innovation play a large role in shaping the user experience. If you want to improve your automated processes, invest thoughtfully in your tools and strategies; a little effort can yield significant results.

In summary, my experience shows that small modifications can produce significant improvements in performance. The 6-axis robot arm is not just a tool; used to its full potential, it is a gateway to greater efficiency. With brands like JAKA leading the way, we can look forward to a future where robotics and automation are seamlessly integrated into our lives.