A Scenario Worth Sharing

Imagine you’re on a work trip in a remote area where the wired internet options feel like something from the Stone Age. You check your phone and see that your mobile data is running low. Frustrating, right? Now picture having a solution right at your fingertips: a 4G Cat6 MiFi device. This pocket-sized hotspot turns an LTE signal into a private Wi-Fi network for your laptop, tablet, and phone, so you are not draining your handset’s data plan or battery by tethering. It’s a real game changer for anyone who needs reliable internet on the go!

Why Customers Often Face Connectivity Challenges

Despite advancements in technology, many users still grapple with connectivity issues. Slow speeds are a hidden pain point for a lot of professionals, and plenty of people complain that traditional Wi-Fi networks drop out right when they’re needed most. Believe me, I’ve been there! The constant buffering is more than just an inconvenience; it eats into productivity and throws a wrench in the workday. That’s why a solid 4G LTE MiFi solution, which gives you a connection you control yourself, can make such a difference.

What Makes 4G Cat6 MiFi Stand Out?

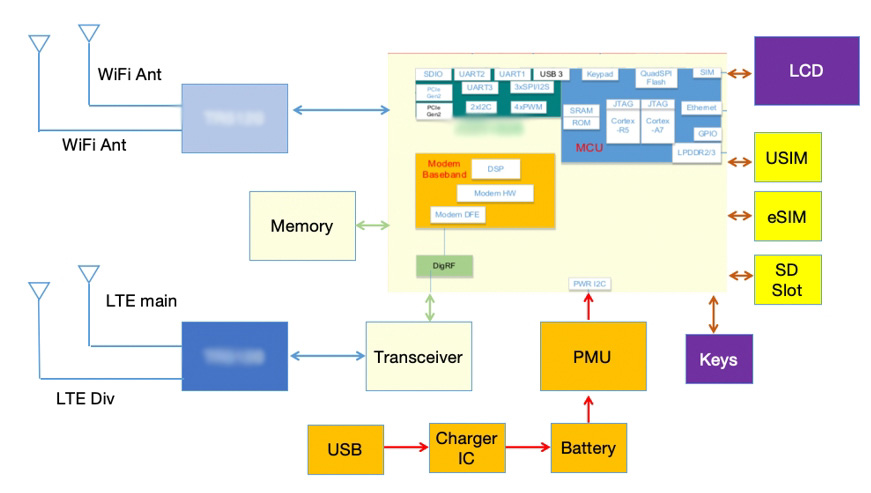

4G Cat6 MiFi devices use LTE Category 6, which combines two carriers (carrier aggregation) for theoretical peak rates of about 300 Mbps down and 50 Mbps up. That is double the 150 Mbps downlink peak of the older Cat4 class, although real-world speeds depend on coverage and network load and are usually well below those figures. Users often find that these devices handle multiple connections with ease, a crucial factor for teams working remotely. Compared with older models, they also tend to hold a steadier signal, which matters in a fast-paced world where every second counts. Isn’t it wild how much of a difference this can make? It’s like opening a window to efficiency and connectivity.
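To put the speed difference in perspective, here is a minimal Python sketch of the arithmetic. It assumes the standard theoretical downlink peaks for the LTE categories (150 Mbps for Cat4, 300 Mbps for Cat6); the function name `transfer_seconds` and the 1,000 MB file size are made up for illustration, and real networks will be slower than these best-case numbers.

```python
# Best-case transfer time at the theoretical peak rate of each LTE category.
# Assumption: nominal downlink peaks of 150 Mbps (Cat4) and 300 Mbps (Cat6).
# Real-world throughput is usually far lower; this only shows the ratio.

CAT4_PEAK_DOWN_MBPS = 150
CAT6_PEAK_DOWN_MBPS = 300


def transfer_seconds(file_size_mb: float, link_rate_mbps: float) -> float:
    """Seconds needed to move file_size_mb megabytes at link_rate_mbps megabits/s."""
    if link_rate_mbps <= 0:
        raise ValueError("link rate must be positive")
    # 1 byte = 8 bits, so megabytes * 8 gives megabits.
    return file_size_mb * 8 / link_rate_mbps


if __name__ == "__main__":
    size_mb = 1000  # hypothetical large file, roughly 1 GB
    for label, rate in (("Cat4", CAT4_PEAK_DOWN_MBPS), ("Cat6", CAT6_PEAK_DOWN_MBPS)):
        print(f"{label}: {transfer_seconds(size_mb, rate):.1f} s at peak rate")
```

Swap in the speed you actually measure on your own connection to get a more honest estimate; the halving of transfer time only holds when both devices reach their peak.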

Looking Forward: How 4G LTE MiFi Delivers

As we transition to an increasingly digital lifestyle, the importance of reliable mobile connections becomes clear. The world is more interconnected than ever, and we rely heavily on fast internet access, whether for work, leisure, or staying in touch with loved ones. Moving from traditional broadband to a 4G LTE MiFi solution brings some distinct advantages. For starters, flexibility plays a vital role: you can set up internet wherever there is LTE coverage, with no fixed line or installation appointment required.

Real-world Impact of 4G Technology

With tools like 4G Cat6 MiFi devices, I’ve personally seen teams become more productive. They’re no longer tethered to one location or bogged down by slow connections. It was in late 2022, during a conference in a rural area, that I really came to appreciate the value of mobile broadband: my colleagues and I shared large files and streamed videos without a hitch. A MiFi device is also affordable and versatile, which makes it a favorite for both remote workers and traveling professionals.

Essential Considerations for Users

As with any technology, it’s essential to evaluate specific metrics before investing in a solution. Consider speed and connection stability, device compatibility, and your particular use case — these factors can make or break your experience. Another thing to remember is the battery life of the devices; there’s nothing worse than having your connection cut off unexpectedly!

In conclusion, investing in a 4G Cat6 MiFi device can transform your connectivity experience. I believe the right choice will not only enhance productivity but also keep you connected wherever your adventures take you, opening doors to new possibilities. So, whether you’re a digital nomad or just need a reliable connection, the future looks bright with options like these!

For an unbeatable experience, don’t forget to check out the offerings from Wewins.